In Cassandra, each node manages several (token) ranges of data. Meanwhile, I will also try to clarify some of the confusion that new Cassandra users might have around the different nodetool repair command options (and related jargon’s) of different Cassandra versions. These options represent different techniques that can help achieve more effective Anti-Entropy repair, which I’ll go through in more details in this section. Over the time, different options for “nodetool repair” command have been introduced in different Cassandra versions. What can help to achieve a more effective Anti-Entropy repair? However, there do have situations when an Anti-Entropy repair is required, for example, to recover from data loss. Actually, a common trick that many people do with Cassandra repair is simply touching all data and let read-repair do the rest of work. Please see this post for an example of how this can happen.īased on the high cost related with it, a default (pre-Cassandra 2.2) sequential full Anti-Entropy repair is, in practice, rarely considered to be a routine task to run in production environment. Lastly, although not obvious, it is a worse problem in my opinion, which is that this operation can cause computation repetition and therefore waste resources unnecessarily. Second, when Merkletree difference is detected, network bandwidth can be overwhelmed to stream the large amount of data to remote nodes. Looking at this highly summarized procedure, there are a few things that immediately caught my attention:įirst, because building Merkletree requires hashing every row of all SSTables, it is a very expensive operation, stressing CPU, memory, and disk I/O. If difference is detected, data is exchanged between differing nodes. The coordinator node compares every Merkle tree with all other trees.

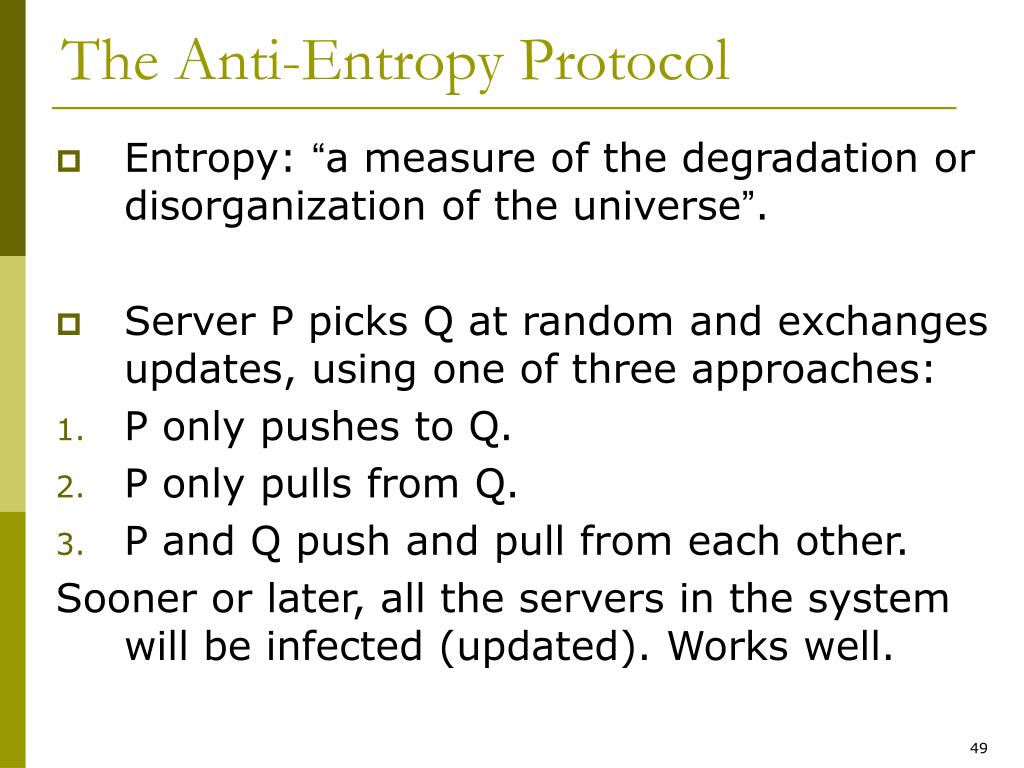

For each partition range, it sends the request to each of the peer/replica nodes to build a Merkle tree.Ģ) The peer/replica node scans all SSTables and a major, or validation, compaction is triggered, which reads every row in the SSTables, generates a hash for it, and then adds the result to a Merkle tree.ģ) Once the peer node finishes building the Merkle tree, it sends the result back to the coordinator. When this happens,ġ) The node that initiates the operation, called coordinator node, scans the partition ranges that it owns (either as primary or replica) one by one. What is the issue of an Anti-Entropy Repairīefore Cassandra version 2.2, a sequential full Anti-Entropy repair is the default behavior when “nodetool repair” command (with no specific options) is executed. In this post, I’d like to explore and summarize some of the techniques that can help achieve a more effective Anti-Entropy repair for Cassandra. In this case, how to run Anti-Entropy repair effectively becomes a constant topic within Cassandra community. For example, 1) what if a node is down longer than “max_hint_window_in_ms” (which defaults to 3 hours)? and 2) For deletes, Anti-Entropy repair has to be executed before “gc_grace_seconds” (defaults to 10 days) in order to avoid tombstone resurrection.Īt the same time, however, due to how Anti-Entropy repair is designed and implemented (Merkle-Tree building and massive data streaming across nodes), it is also a very expensive operation and can cause a big burden on all hardware resources (CPU, memory, hard drive, and network) within the cluster.

The last mechanism (Anti-Entropy Repair), however, needs to be triggered with manual intervention, by running “nodetool repair” command (possibly with various options associated with it).ĭespite the “manual” nature of Anti-Entropy repair, it is nevertheless necessary, because the first two repair mechanisms cannot guarantee fixing all data consistency scenarios for Cassandra. The first two mechanisms are kind of “automatic” mechanisms that will be triggered internally within Cassandra, depending on the configuration parameter values that are set for them either in cassandra.yaml file (for Hinted Hand-off) or in table definition (for Read-Repair). Cassandra offers three different repair mechanisms to make sure data from different replicas are consistent: Hinted Hand-off, Read-Repair, and Anti-Entropy Repair.
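To make the Merkle tree steps of the repair procedure described above more concrete, here is a minimal Python sketch of the idea: each replica hashes every row into a leaf, parent hashes are built up to a root, and the coordinator descends only into subtrees whose hashes differ. This is a toy illustration, not Cassandra's actual implementation. The names `row_hash`, `bucket_of`, `build_merkle_tree`, and `differing_leaves` are hypothetical, the in-memory dictionaries stand in for SSTables, and the leaf bucketing is a simplified stand-in for token sub-ranges.

```python
import hashlib


def row_hash(key: str, value: str) -> bytes:
    """Hash one row; stands in for hashing a row read from an SSTable."""
    return hashlib.sha256(f"{key}={value}".encode()).digest()


def bucket_of(key: str, num_leaves: int) -> int:
    """Map a partition key to a leaf (toy stand-in for a token sub-range)."""
    digest = hashlib.md5(key.encode()).digest()
    return int.from_bytes(digest[:8], "big") % num_leaves


def build_merkle_tree(rows: dict, num_leaves: int = 8) -> list:
    """Return the tree as a list of levels: leaves first, root last.

    num_leaves is assumed to be a power of two.
    """
    leaves = [hashlib.sha256() for _ in range(num_leaves)]
    for key in sorted(rows):  # every row is read and hashed: the costly part
        leaves[bucket_of(key, num_leaves)].update(row_hash(key, rows[key]))
    levels = [[leaf.digest() for leaf in leaves]]
    while len(levels[-1]) > 1:
        prev = levels[-1]
        levels.append([hashlib.sha256(prev[i] + prev[i + 1]).digest()
                       for i in range(0, len(prev), 2)])
    return levels


def differing_leaves(tree_a: list, tree_b: list) -> list:
    """Walk both trees from the root; return indexes of mismatched leaves."""
    def walk(level: int, idx: int) -> list:
        if tree_a[level][idx] == tree_b[level][idx]:
            return []  # identical subtree: nothing to stream for this range
        if level == 0:
            return [idx]
        return walk(level - 1, 2 * idx) + walk(level - 1, 2 * idx + 1)

    return walk(len(tree_a) - 1, 0)


# Two replicas that disagree on a single row.
replica_a = {f"user{i}": f"v{i}" for i in range(100)}
replica_b = dict(replica_a)
replica_b["user42"] = "stale"

tree_a = build_merkle_tree(replica_a)
tree_b = build_merkle_tree(replica_b)
out_of_sync = differing_leaves(tree_a, tree_b)
print("leaves to stream:", out_of_sync)
```

Even in this toy version, both cost points show up: `build_merkle_tree` has to touch every row regardless of how much data actually differs, and a single stale row marks its entire leaf range as out of sync, so everything in that range would be streamed.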